The ‘Low Friction No Cost’ AI Inference Service for Research That You May Not Know About

Inside the Argonne ALCF platform that's already processed 11 billion tokens for researchers.

Would you believe that a no-to-low cost inference service already exists for the scientific research community? I recently ran across a webinar from December from Argonne National Laboratory (webinar slides | website) about their no-to-low cost federated AI inference service. It has been running since 2024, has served ~220 users, processed ~10 million requests, and generated over 11 billion tokens1!

Not only is it is open to the scientific community*, but it costs zero dollars per token. AND it hosts LLM, VLM, and embedding inference endpoints that live on dedicated nodes that avoid HPC queues and auto-scale. It also provides both API and OpenWebUI (open source ChatGPT) experiences. Since it relies on Globus Auth, many faculty are probably already technically authenticated to use it with an approved allocation2.

*The Catch

Ok so there is a little bit of friction and cannot just log in and start blasting through tokens. You need an allocation first. However, they do have a Director’s Discretionary (DD) Allocation designed for “startups” and getting code running. It doesn’t require DOE sponsorship. It’s the “let me just try this out” path of least resistance before applying for major awards.

Once your DD proposal is approved, you will receive the Project Short Name and Principal Investigator (PI)details necessary to finally complete the account registration process.

The account activation and requirements FAQs are helpful, but if you want to skip to the Getting Started portal, the documentation is fairly robust and straightforward: https://www.alcf.anl.gov/get-started.

Here is what you will need for the form:

Principal Investigator (PI) Name: The name of the project lead who will act as your “Sponsor” and approve your request.

Project / Allocation: You have to apply and be approved for this before the account is created.

Institutional Email: Use your .edu or .gov address (not Gmail/Yahoo) to avoid delays.

ORCID iD: You are required to link your ORCID account, so ensure you have your login credentials ready.

Legal Name & Citizenship info: You must accurately declare your citizenship status (passport)

Curriculum Vitae (CV): A PDF copy of your CV is often required to be uploaded directly during this step. (for non-US Citizens)

Token Preference: You will need to choose between a Mobile Token (app-based) or a Physical Cryptocard. - kind of like Duo.

More about the service

They are running this service on their Sophia cluster (24 nodes of NVIDIA DGX A100s) and the Metis cluster (SambaNova SN40L systems).

Sophia uses vLLM and supports the full range of OpenAI-compatible endpoints including chat, completions, embeddings, and batch processing. (available models)

Metis uses SambaNova’s inference API and currently supports only chat completions. (available models)

There are two more clusters coming soon...possibly Solstice and Equinox….which feature tens do thousands of NVIDIA Blackwell GPUs.3

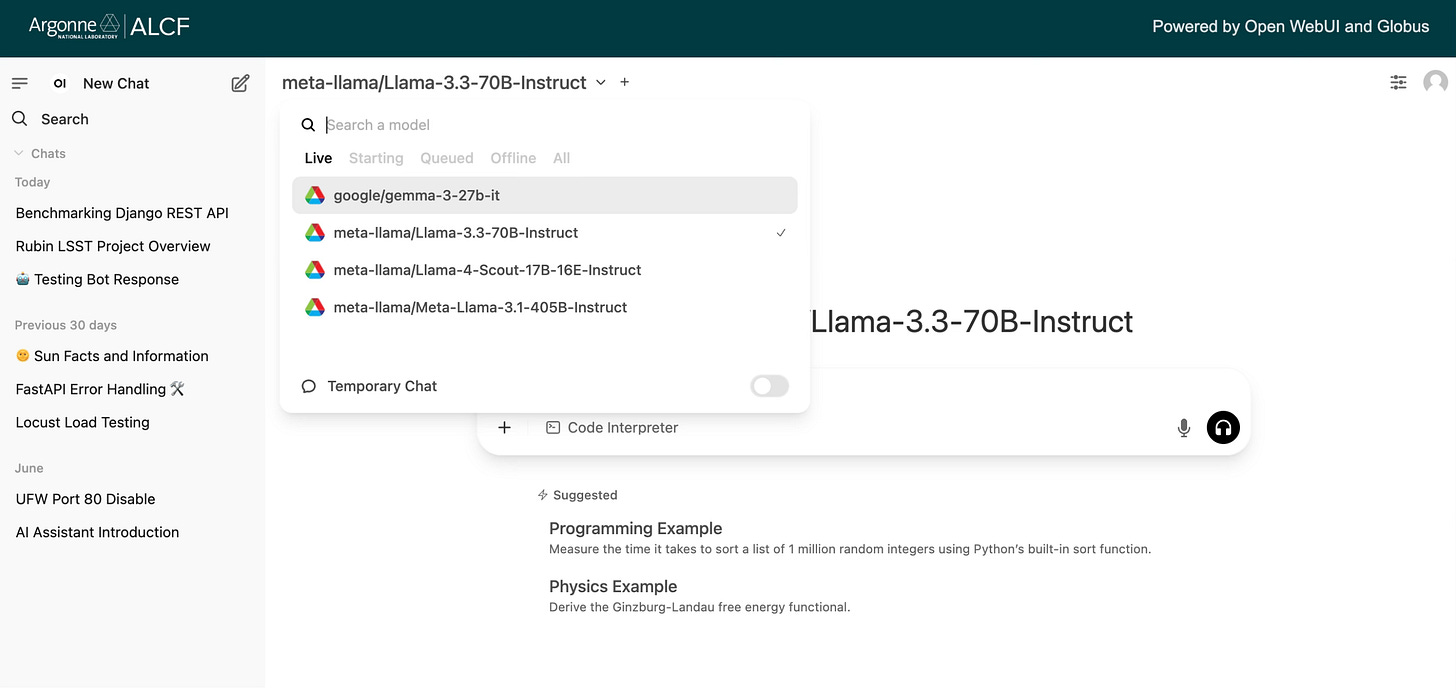

As stated earlier, there is a web interface (based on OpenWebUI) that looks like ChatGPT at https://inference.alcf.anl.gov. It provides multi-model chat access, RAG. agent capability, and all that you would expect from an AI chat interface these days. But the real fun and power is the programmatic access. The API is OpenAI-compliant. That means your researchers don’t need to rewrite code….just change the base_url in their Python script. So you could easily use it alongside the code you might have written with your on campus Portkey, LiteLLM, OpenRouter, or campus approved AI cloud APIs.

from openai import OpenAI

from inference_auth_token import get_access_token

# Get your access token

access_token = get_access_token()

client = OpenAI(

api_key=access_token,

base_url="https://inference-api.alcf.anl.gov/resource_server/metis/api/v1"

)

response = client.chat.completions.create(

model="gpt-oss-120b-131072",

messages=[{"role": "user", "content": "Explain quantum computing in simple terms."}]

)

print(response.choices[0].message.content)And this is just the tip of the iceberg, these inference services join all the other services and GPU power on the clusters. Some interesting examples they highlighted from the webinar:

Agentic Workflows: They showed off some real work with ChemGraph. This is an agentic workflow where the LLM acts as a router, calling out to Python libraries like RDKit to actually simulate molecular properties4.

AuroraGPT (site): This is a model fine-tuned on scientific literature.

Batch vs Real-Time inference: The inference endpoints are not just for chat and real time completions. Argonne has built a Batch API specifically for use cases when you want to dump a file with 150,000 requests and let their A100s chew through it.

All the other services: LLMs are only a small fraction of what these clusters offer for science. And some exciting upgrades are planned over the next few years due to public-private partnerships.

As with any external compute service, please check with your IT, research computing, and research offices first (they may want to check out the data policies and software policies to determine fit). But for researchers looking to balance the high demand for AI compute with flat budgets and have a project to lean on, the ALCF service could represent a significant opportunity.

For questions or support, please contact ALCF Support.

https://www.alcf.anl.gov/sites/default/files/2025-12/2025-12-03-webinar.pdf

https://www.cilogon.org/news/globus-auth

https://www.anl.gov/article/argonne-expands-nations-ai-infrastructure-with-powerful-new-supercomputers

https://www.arxiv.org/pdf/2506.06363